Cloudflare Outage November 18 2025

At approximately 11:20 UTC on November 18, 2025, internet infrastructure giant Cloudflare experienced a catastrophic global outage that brought down some of the world’s most critical digital services. Within minutes, OpenAI’s ChatGPT became unreachable, X (formerly Twitter) displayed error messages, and thousands of websites across the globe went dark. The incident exposed a fundamental vulnerability in the architecture of the modern internet: the concentration of critical infrastructure in the hands of a few providers.

The most severe phase of the outage lasted approximately three hours, affecting an estimated 20% of all websites globally. Cloudflare’s own status page reported “widespread 500 errors,” with both its dashboard and API failing simultaneously. For millions of users worldwide, the internet effectively stopped working.

Understanding the Scale of the Cloudflare Outage

Cloudflare operates one of the world’s largest content delivery networks (CDN), serving as the backbone for millions of websites and applications. The company routes over 70 million HTTP requests per second at peak times through more than 330 data centers spanning 120 countries. When this infrastructure fails, the cascading impact reverberates across the entire digital economy.

The November 18 outage began at approximately 6:20 AM Eastern Time when Cloudflare detected what a company spokesperson described as “a spike in unusual traffic” to one of its core services. Within minutes, users attempting to access websites protected by Cloudflare received HTTP 500 Internal Server Error messages, indicating server-side failures beyond their control.

Downdetector, the internet’s primary outage monitoring service, recorded over 12,000 reports for X within the first hour. The irony was not lost on users: Downdetector itself relies on Cloudflare infrastructure and became partially inaccessible during the incident. This circular dependency highlighted how deeply Cloudflare has embedded itself into the internet’s operational fabric.

Major Services Impacted by the Cloudflare Failure

OpenAI and ChatGPT: AI Services Grind to a Halt

OpenAI’s suite of artificial intelligence services, including ChatGPT, DALL-E, and the company’s developer API, experienced complete or partial outages throughout the morning. OpenAI’s status page acknowledged the disruption, stating: “We are experiencing issues due to an outage with one of our third-party service providers.”

The dependence of AI services on CDN infrastructure reveals an often-overlooked aspect of modern machine learning deployments. While OpenAI’s models run on dedicated compute clusters, the web interfaces, API gateways, and authentication systems that users interact with flow through Cloudflare’s network. When this intermediary layer fails, even the most powerful AI systems become inaccessible.

ChatGPT users worldwide reported seeing error messages reading “Please unblock challenges.cloudflare.com to proceed” when attempting to access the service. This specific error indicated that Cloudflare’s bot protection and challenge system, which verifies human users before granting access to websites, had failed. Without this verification layer functioning, OpenAI’s systems could not distinguish legitimate users from automated bots, forcing the entire service offline as a security precaution.

The outage particularly impacted enterprise customers who integrate OpenAI’s API into their business applications. One financial services firm reported that their customer service chatbot, powered by GPT-4, became completely non-functional for over two hours, forcing them to route all customer inquiries to human agents. The resulting backlog took more than six hours to clear after services resumed.

X (Twitter): Social Media’s Achilles Heel

X, Elon Musk’s social media platform, experienced severe disruptions that prevented users from loading timelines, posting content, or accessing direct messages. The platform’s reliance on Cloudflare for DDoS protection, content delivery, and traffic management meant that when Cloudflare failed, X’s infrastructure became vulnerable and largely inaccessible.

Peak outage reports for X reached 12,374 simultaneous complaints on Downdetector, with users across North America, Europe, and Asia reporting identical error messages. The geographic distribution of reports confirmed that this was not a localized network issue but a global infrastructure failure affecting Cloudflare’s entire network.

The timing proved particularly unfortunate as major news events unfolded simultaneously, including market volatility following economic data releases and breaking political developments. Social media analysts noted that during critical news moments, alternative platforms like Bluesky and Mastodon experienced unprecedented traffic spikes as users sought functioning alternatives.

Creative Platforms and Productivity Tools

Design platform Canva became completely inaccessible, stranding millions of users in the middle of active projects. With over 100 million monthly active users relying on Canva for everything from social media graphics to business presentations, the outage disrupted workflows for creative professionals, marketers, and educators worldwide.

Music streaming service Spotify experienced intermittent failures, with users unable to access playlists or stream music. The outage affected both the web player and mobile applications, as authentication and content delivery both route through Cloudflare infrastructure.

Popular film logging service Letterboxd, game wikis for titles including League of Legends and RuneScape, and design collaboration tools all reported simultaneous failures. The diversity of affected services underscored how Cloudflare’s infrastructure supports not just major tech platforms but also specialized communities and niche services.

Transportation and Critical Infrastructure

The outage extended beyond entertainment and social media into critical infrastructure. Public transportation apps, including New Jersey Transit’s mobile ticketing system, displayed error messages preventing users from purchasing tickets. This created confusion at stations and raised questions about the wisdom of relying on cloud-dependent systems for essential public services.

News organizations found themselves unable to update their websites as content management systems failed. Even some government services experienced disruptions, though most critical emergency services maintain redundant systems that operated independently of Cloudflare.

Technical Analysis: What Caused the Cloudflare Outage?

The Role of CDN Infrastructure in Modern Internet Architecture

Understanding the November 18 outage requires examining how content delivery networks function and why they have become indispensable to modern internet operations. A CDN is a geographically distributed network of servers that cache content close to end users, dramatically reducing latency and improving performance.

Cloudflare’s CDN operates as a reverse proxy between users and origin servers. When a user requests content from a website using Cloudflare, the request first hits one of Cloudflare’s edge servers located near the user. If the edge server has the requested content cached, it serves the content directly. If not, the edge server queries the origin server, caches the response, and delivers it to the user.
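The hit-or-miss flow described above can be sketched in a few lines. This is a toy model, not Cloudflare's implementation: the `EdgeServer` class, the `origin` function, and the in-memory dictionary cache are all illustrative assumptions, and real edge caches also handle expiry, validation, and eviction.

```python
from typing import Callable, Dict


class EdgeServer:
    """Toy edge node: serve from cache on a hit, query the origin on a miss."""

    def __init__(self, fetch_from_origin: Callable[[str], str]) -> None:
        self._fetch_from_origin = fetch_from_origin
        self._cache: Dict[str, str] = {}
        self.origin_requests = 0  # counts how often the origin was contacted

    def handle(self, path: str) -> str:
        if path in self._cache:
            # Cache hit: the origin server is never contacted.
            return self._cache[path]
        # Cache miss: query the origin, cache the response, then deliver it.
        self.origin_requests += 1
        body = self._fetch_from_origin(path)
        self._cache[path] = body
        return body


def origin(path: str) -> str:
    """Hypothetical origin server that renders content for a path."""
    return f"content for {path}"


edge = EdgeServer(origin)
first = edge.handle("/index.html")   # miss: goes to the origin
second = edge.handle("/index.html")  # hit: served from the edge cache
print(edge.origin_requests)
```

Two requests for the same path reach the origin only once, which is the load reduction the architecture is designed to provide.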

This architecture provides multiple benefits. Websites load faster because content comes from nearby servers rather than distant origin servers. Origin servers handle less traffic because Cloudflare absorbs the load. DDoS attacks get filtered before reaching origin infrastructure. Security threats get blocked at the network edge.

However, this architecture creates a single point of failure. When Cloudflare’s edge network experiences problems, every website behind it becomes inaccessible, regardless of whether their origin servers remain healthy. The November 18 outage demonstrated this vulnerability at global scale.

Unusual Traffic Spike and System Degradation

Cloudflare’s official statement mentioned detecting “a spike in unusual traffic” around 6:20 AM ET, shortly before the outage began. While the company has not released detailed technical information about the root cause, this description suggests several possible scenarios.

One possibility involves a distributed denial-of-service (DDoS) attack overwhelming Cloudflare’s infrastructure. However, Cloudflare typically handles massive DDoS attacks as part of its core service offering. The company claims to have blocked over 33 trillion threats monthly across its network. An attack large enough to overwhelm Cloudflare’s entire global infrastructure would represent an unprecedented escalation in cyber warfare.

More likely scenarios involve internal configuration errors or cascading failures within Cloudflare’s systems. The company had scheduled maintenance at multiple data centers on November 18, including facilities in Atlanta, Chicago, Miami, Buenos Aires, and Santiago. While Cloudflare has not confirmed a connection, industry experts speculate that a configuration change during maintenance may have propagated across the network, triggering the global outage.

Network engineers familiar with large-scale infrastructure note that Anycast routing, which Cloudflare uses to automatically direct users to the nearest healthy data center, can sometimes create unexpected problems. If a routing configuration error causes Anycast to direct traffic incorrectly, the entire network can experience cascading failures as traffic floods inappropriate data centers.

The HTTP 500 Error Explained

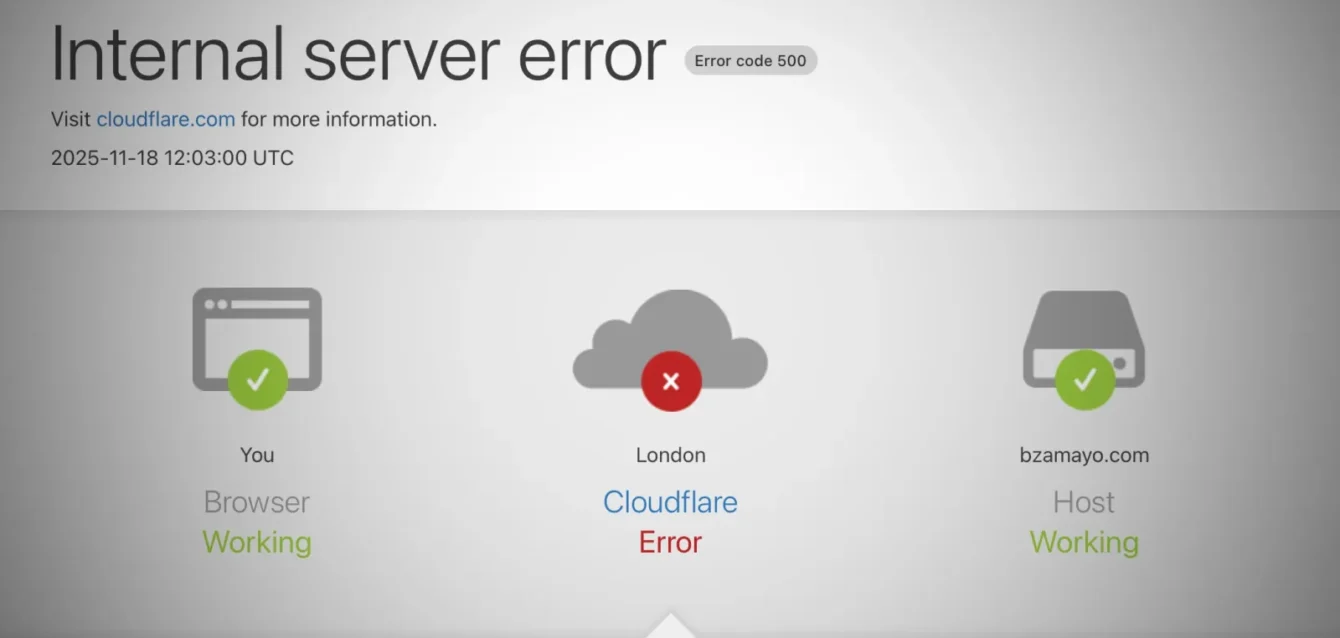

Users encountering the outage saw HTTP 500 Internal Server Error messages, which indicate problems on the server side rather than client-side issues. Unlike 404 errors (content not found) or 403 errors (access forbidden), 500 errors mean the server encountered an unexpected condition preventing it from fulfilling the request.
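The client-side versus server-side distinction follows directly from the numeric range of the status code. A minimal sketch using Python's standard `http.HTTPStatus` enum; the `classify` helper is an illustrative name, not part of any library:

```python
from http import HTTPStatus


def classify(status: int) -> str:
    """Map an HTTP status code to the side responsible for the failure."""
    if 400 <= status <= 499:
        return "client error"   # e.g. 403 Forbidden, 404 Not Found
    if 500 <= status <= 599:
        return "server error"   # e.g. 500 Internal Server Error
    return "not an error"


for code in (403, 404, 500):
    print(code, HTTPStatus(code).phrase, "->", classify(code))
```

A monitoring script that sees a sudden rise in the "server error" bucket across many unrelated sites has a strong hint that a shared upstream layer, rather than the user's request, is at fault.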

In Cloudflare’s case, the 500 errors originated from failures in core services including the dashboard API, authentication systems, and request routing infrastructure. When these fundamental components fail, edge servers cannot properly process requests, triggering 500 errors for all traffic attempting to pass through the network.

The widespread nature of the 500 errors, affecting both Cloudflare’s own dashboard and customer websites, indicated that the failure occurred at a foundational level within the company’s infrastructure stack. This suggests problems with databases, load balancers, or core networking components rather than isolated application failures.

The Single Point of Failure Problem in Internet Infrastructure

The November 18 Cloudflare outage starkly illustrated a growing concern among infrastructure experts: the internet has become dangerously centralized. A handful of companies, including Cloudflare, Amazon Web Services, and Microsoft Azure, now control critical infrastructure supporting millions of websites and services.

Graeme Stewart, head of public sector at cybersecurity firm Check Point, commented on the outage: “During today’s outage, news sites, payments, public information pages and community services all froze. That was not because each organization failed on its own. It was because a single layer they all rely on stopped responding.”

This concentration of infrastructure creates systemic risk. When one provider experiences problems, vast swaths of the internet become inaccessible simultaneously. The economic impact extends far beyond the immediate inconvenience to users. E-commerce sites lose sales, productivity tools become unavailable, and critical communications channels go dark.

Recent Pattern of Major Infrastructure Outages

The Cloudflare incident represents the third major infrastructure failure in less than a month, following significant outages at Amazon Web Services in October and Microsoft Azure shortly afterward. This clustering of incidents raises questions about whether infrastructure providers have adequately invested in redundancy and resilience as they scale to serve increasing percentages of internet traffic.

The October AWS outage affected services including Snapchat, Signal, and numerous financial platforms. Microsoft’s Azure failure impacted Office 365, Outlook, and Xbox Live services. When combined with the November 18 Cloudflare outage, these incidents demonstrate that no provider, regardless of size or resources, remains immune to catastrophic failures.

Industry analysts note that scheduled maintenance, which typically happens seamlessly, appears to be triggering unexpected problems across multiple providers. As systems grow more complex and interdependent, the blast radius of configuration errors expands. What might once have caused localized issues now cascades globally within minutes.

Enterprise Risk and Multi-CDN Strategies

Forward-thinking enterprises have begun implementing multi-CDN strategies to reduce dependence on single providers. This approach routes traffic through multiple CDN providers simultaneously or maintains hot standby arrangements that automatically fail over when the primary provider experiences issues.

Implementing multi-CDN architecture requires significant engineering investment. Organizations must configure DNS-based failover with health checks that detect outages within seconds and automatically redirect traffic. Providers such as Fastly, Akamai, or Amazon CloudFront can serve as backup CDNs. Cloudflare’s own Load Balancing with traffic steering handles failover between origins, but the health checks and DNS that steer traffic between CDNs need to run outside the primary provider in order to survive its outage.

The financial calculus of multi-CDN strategies depends on the cost of downtime. An e-commerce site processing $10 million monthly through Cloudflare averages roughly $14,000 in sales per hour, and the loss during a peak-hour outage can run well above that average once cart abandonment and reputational damage are factored in. For organizations where that figure exceeds the cost of maintaining backup CDN capacity, the extra spend becomes justified insurance.

However, smaller organizations often lack the resources to implement sophisticated failover systems. For the millions of websites operated by small businesses, non-profits, and individual creators, single-provider dependence remains the norm. This creates a two-tier internet where well-resourced enterprises can architect around single points of failure while everyone else remains vulnerable.

Cloudflare’s Response and Recovery Timeline

Initial Detection and Public Communication

Cloudflare’s incident response team posted the first public acknowledgment at 11:48 UTC, approximately 30 minutes after the outage began. The company’s status page displayed “Identified – The issue has been identified and a fix is being implemented” at 13:09 UTC.

Industry best practices for incident management emphasize transparent, frequent communication during outages. Cloudflare adhered to these practices, providing updates every 15-30 minutes throughout the incident. However, the updates remained vague about root causes, focusing on mitigation efforts rather than technical details.

At 13:13 UTC, Cloudflare announced partial recovery: “We have made changes that have allowed Cloudflare Access and WARP to recover. Error levels for Access and WARP users have returned to pre-incident rates.” This indicated that the company was restoring services incrementally rather than attempting a single, comprehensive fix.

Temporary Service Degradations During Recovery

As part of recovery efforts, Cloudflare temporarily disabled access in London to WARP, the company’s VPN-like service that provides secure internet connectivity. Users attempting to connect through WARP in London saw connection failures while engineers worked to restore broader services.

This decision exemplified the difficult tradeoffs facing incident response teams during major outages. Disabling certain services reduced load on struggling systems, potentially accelerating overall recovery. However, it also extended outages for users dependent on those specific services.

By 14:00 UTC, Cloudflare reported that most services had returned to normal operation, though the company warned that “customers may continue to observe higher-than-normal error rates as we continue remediation efforts.” This cautious messaging reflected the reality that recovering from major infrastructure failures rarely happens instantaneously; systems often require hours to fully stabilize even after the root cause is addressed.

Post-Incident Analysis and Transparency

At the time of this writing, Cloudflare has not yet published a detailed post-incident report explaining the technical root cause of the November 18 outage. The company typically releases such reports within 48-72 hours of major incidents, providing transparency about what went wrong and what steps the company is taking to prevent recurrence.

Previous Cloudflare post-mortems have set a high standard for technical transparency, often including detailed explanations of configuration errors, software bugs, or architectural weaknesses that contributed to outages. Engineers across the industry study these reports to learn from Cloudflare’s experiences and improve their own systems.

The delay in releasing detailed technical information about the November 18 incident may indicate that the root cause investigation remains ongoing. Complex infrastructure failures often involve multiple contributing factors that require extensive analysis to fully understand. Premature conclusions can lead to ineffective remediation strategies that fail to address underlying issues.

Impact on AI Services and Machine Learning Infrastructure

The disruption to OpenAI’s services highlighted an often-overlooked dependency in modern AI infrastructure. While much attention focuses on the computational requirements for training and running large language models, the infrastructure surrounding these models—API gateways, authentication systems, content delivery networks—proves equally critical to service availability.

OpenAI’s architecture routes user requests through Cloudflare for DDoS protection, bot detection, and global content delivery. When a user sends a prompt to ChatGPT, the request first hits Cloudflare’s edge network, where security checks verify that the user is human and not an automated bot. Only after passing these checks does the request forward to OpenAI’s infrastructure for processing.

This architecture makes sense from a security and performance perspective. AI services face constant attacks from malicious actors attempting to abuse free tiers, extract proprietary information, or simply disrupt service availability. Cloudflare’s bot detection and DDoS protection provide essential defenses.

However, the dependency creates vulnerability. When Cloudflare fails, even though OpenAI’s underlying AI models and compute clusters remain operational, users cannot access the service. The November 18 outage demonstrated that AI service reliability depends not just on model quality and compute capacity but also on the entire stack of infrastructure enabling users to reach those capabilities.

Implications for Enterprise AI Deployments

Organizations building AI-powered applications face similar dependencies. Most AI services, whether provided by OpenAI, Anthropic, Google, or other vendors, route traffic through CDN providers for performance and security. When these intermediary layers fail, AI-dependent business processes stop functioning.

For customer service chatbots handling thousands of simultaneous conversations, an outage lasting several hours can create massive backlogs. Financial services using AI for fraud detection must either route transactions through backup systems with potentially different risk profiles or delay transaction processing until AI services recover.

Healthcare applications using AI for diagnostic support face even more critical decisions during outages. While no responsible healthcare provider relies solely on AI for diagnosis, interruptions to AI support tools can slow clinical workflows and potentially impact patient care quality.

These dependencies argue for diversified AI infrastructure strategies. Organizations building mission-critical AI applications should consider maintaining relationships with multiple AI providers, implementing local caching of AI responses for common queries, and designing fallback workflows that can operate without AI assistance during outages.
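One way to combine the three ideas (multiple providers, a local cache for common queries, and a non-AI fallback) is sketched below. Everything here is hypothetical: the `Provider` callables stand in for real vendor clients, `ProviderUnavailable` is an invented exception, and a production system would add timeouts, cache expiry, and per-provider prompt handling.

```python
from typing import Callable, Dict, Sequence

# A provider is any callable that takes a prompt and returns a reply.
Provider = Callable[[str], str]


class ProviderUnavailable(Exception):
    """Raised by a provider client when its service cannot be reached."""


def answer(
    prompt: str,
    providers: Sequence[Provider],
    cache: Dict[str, str],
    fallback_message: str,
) -> str:
    # 1. Serve common queries from the local cache without any network call.
    if prompt in cache:
        return cache[prompt]
    # 2. Try each provider in priority order, skipping ones that are down.
    for call in providers:
        try:
            reply = call(prompt)
        except ProviderUnavailable:
            continue
        cache[prompt] = reply
        return reply
    # 3. Every provider failed: hand off to the non-AI workflow.
    return fallback_message


def primary(prompt: str) -> str:
    raise ProviderUnavailable("primary provider unreachable")


def secondary(prompt: str) -> str:
    return f"secondary answer to: {prompt}"


cache: Dict[str, str] = {}
reply = answer("reset my password", [primary, secondary], cache, "Routing you to a human agent.")
print(reply)
```

The fallback message is the important part of the design: when every provider is unreachable, the application still returns something actionable instead of an error.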

The Economics of Internet Infrastructure Fragility

The November 18 outage imposed significant economic costs across every sector touched by the affected services. Quantifying these costs precisely proves difficult because many impacts remain hidden in lost productivity, delayed projects, and degraded customer experiences that don’t appear in traditional accounting.

Direct Revenue Losses

E-commerce represents the most straightforward economic impact. Websites that became inaccessible during the outage lost every sale that would have occurred during that period. For large e-commerce platforms processing millions of dollars hourly, even brief outages translate to six-figure revenue losses.

The timing of the outage, occurring during morning hours in the United States and early afternoon in Europe, hit peak shopping and business hours in major markets. November 18 also fell on a Tuesday; a weekend outage would likely have imposed less economic damage, because business activity is concentrated on weekdays.

Beyond lost sales, outages trigger longer-term economic consequences. Studies of previous major internet outages show that customer trust erodes when services prove unreliable. Customers who encounter errors during critical moments often switch to competitors, creating permanent market share losses extending far beyond the immediate outage.

Productivity and Operational Costs

Organizations whose internal tools route through Cloudflare experienced workforce productivity losses. When collaboration tools, internal wikis, or business applications become inaccessible, employees cannot perform their normal duties.

Information technology teams bore additional costs responding to the outage. Help desk staff fielded thousands of support requests from confused users. Network engineers investigated whether the problems originated from local infrastructure or broader internet issues. Executives demanded status updates and contingency plans.

These indirect costs often exceed direct revenue losses. A company with 10,000 employees averaging $50 per hour in compensation loses approximately $500,000 for every hour those employees cannot work productively. For organizations heavily dependent on cloud-based tools, the November 18 outage likely triggered seven-figure productivity losses.
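The arithmetic behind that estimate is simple enough to adapt to other headcounts. In the sketch below, the `fraction_blocked` parameter is an added assumption (not every employee is fully idle during an outage), and the three-hour duration is taken from the approximate length of the incident.

```python
def hourly_productivity_loss(
    employees: int,
    hourly_compensation: float,
    fraction_blocked: float = 1.0,
) -> float:
    """Estimated cost of one hour in which employees cannot work.

    fraction_blocked is a hypothetical knob: 1.0 means everyone is idle,
    0.25 means a quarter of the workforce is effectively blocked.
    """
    return employees * hourly_compensation * fraction_blocked


per_hour = hourly_productivity_loss(10_000, 50.0)  # the article's example
three_hours = per_hour * 3                         # approximate outage length
print(per_hour, three_hours)  # 500000.0 1500000.0
```

Lowering `fraction_blocked` to reflect partial disruption scales the estimate linearly, which is usually a more defensible figure to present than the worst case.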

Market Reactions and Stock Price Impacts

Cloudflare’s stock price dropped more than 5% in pre-market trading following news of the outage, reflecting investor concern about reliability and potential customer attrition. While the stock partially recovered as services came back online, the incident reminded investors that infrastructure companies face operational risks that can rapidly destroy shareholder value.

The stock price impact extended beyond Cloudflare itself. Publicly traded companies providing competing CDN services saw modest gains as analysts speculated about potential customer migration. Cloud infrastructure providers positioning themselves as more reliable alternatives received increased investor attention.

Financial markets have become increasingly sensitive to major technology infrastructure failures. The clustering of AWS, Azure, and Cloudflare outages within a month reinforced concerns that internet infrastructure has not scaled resilience investments to match growth in traffic and usage.

Lessons for Building Resilient Internet Architecture

The November 18 Cloudflare outage provides valuable lessons for infrastructure architects, business leaders, and policymakers concerned about internet resilience. While no system can achieve perfect reliability, thoughtful design choices can dramatically reduce both the frequency and impact of failures.

Implementing Geographic and Provider Redundancy

The most effective protection against single-provider failures involves maintaining capacity with multiple providers. Organizations serious about resilience should route traffic through at least two independent CDN providers, with automatic failover configured to redirect users within seconds of detecting problems.

DNS-based failover provides the foundation for multi-provider strategies. Organizations configure health checks that continuously monitor CDN availability from multiple global vantage points. When health checks detect failures, DNS records update to point traffic toward backup providers.
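The decision logic behind such a failover can be sketched without any network code. In this illustration the provider names, the `.example` hostnames, the vantage-point labels, and the majority quorum are all assumptions; a real deployment would feed in live probe results and push the chosen target to its DNS provider through that provider's API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Provider:
    name: str
    cname: str  # hypothetical DNS target for this CDN


def is_healthy(probe_results: Dict[str, bool], quorum: float = 0.5) -> bool:
    """Healthy when more than `quorum` of the vantage points succeeded."""
    if not probe_results:
        return False  # no data is treated as unhealthy
    successes = sum(probe_results.values())
    return successes / len(probe_results) > quorum


def choose_target(
    providers: List[Provider],
    probes: Dict[str, Dict[str, bool]],
) -> Optional[Provider]:
    """Return the first healthy provider in priority order, if any."""
    for provider in providers:
        if is_healthy(probes.get(provider.name, {})):
            return provider
    return None


providers = [
    Provider("primary-cdn", "site.primary-cdn.example"),
    Provider("backup-cdn", "site.backup-cdn.example"),
]
probes = {
    "primary-cdn": {"us-east": False, "eu-west": False, "ap-south": False},
    "backup-cdn": {"us-east": True, "eu-west": True, "ap-south": True},
}
target = choose_target(providers, probes)
print(target.cname if target else "no healthy provider")
```

Requiring a quorum across vantage points keeps a single failing probe location from triggering an unnecessary failover.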

Setting appropriate DNS Time-to-Live (TTL) values proves critical. Lower TTLs (under 5 minutes) enable faster failover but increase DNS query load. Higher TTLs improve performance and reduce DNS costs but extend outage duration because cached DNS records take longer to expire.
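A rough way to reason about the tradeoff is to add detection time to the TTL. The model below is a simplification under stated assumptions: the check interval and failure threshold are example values, and it ignores resolvers that cache records longer than the TTL allows.

```python
def worst_case_failover_seconds(
    check_interval_s: int,
    failures_to_trip: int,
    dns_ttl_s: int,
) -> int:
    """Upper bound on how long users may still be sent to a failed provider.

    detection time = consecutive failed checks needed x check interval
    propagation    = time for already-cached DNS answers to expire (the TTL)
    """
    detection = check_interval_s * failures_to_trip
    return detection + dns_ttl_s


# Example values (assumptions): checks every 10 s, 3 failures to trip.
long_ttl = worst_case_failover_seconds(10, 3, dns_ttl_s=300)
short_ttl = worst_case_failover_seconds(10, 3, dns_ttl_s=30)
print(long_ttl, short_ttl)  # 330 60
```

In this example, cutting the TTL from five minutes to thirty seconds shrinks the worst-case window from 330 seconds to 60, at the price of roughly ten times as many DNS lookups from resolvers.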

Caching Strategies and Offline Resilience

Aggressive edge caching reduces dependency on backend infrastructure during outages. By serving cached content for extended periods, organizations can maintain partial functionality even when origin servers or CDN providers fail.

Two Cache-Control directives help here. With stale-while-revalidate, browsers and edge caches can serve slightly outdated content while fetching fresh content in the background. With stale-if-error, a cache can keep serving a stale copy when the origin returns an error or cannot be reached. Together they mean users see potentially stale information during outages rather than error messages, maintaining basic functionality until services recover.
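RFC 5861 defines two related Cache-Control extensions: `stale-while-revalidate` covers background refresh, and `stale-if-error` covers serving stale content when the origin fails. The sketch below models how a cache that honors both might decide what to do. The function name and return strings are illustrative, the header values are example numbers, and support for these directives varies between browsers and CDNs.

```python
# Example response header (values are assumptions for illustration):
#   Cache-Control: max-age=60, stale-while-revalidate=300, stale-if-error=86400


def cache_decision(
    age_s: int,
    max_age_s: int,
    stale_while_revalidate_s: int,
    stale_if_error_s: int,
    origin_ok: bool,
) -> str:
    """Decide how a cache honoring RFC 5861 could treat a stored response."""
    if age_s < max_age_s:
        return "serve fresh"
    staleness = age_s - max_age_s
    if origin_ok:
        if staleness <= stale_while_revalidate_s:
            return "serve stale, revalidate in background"
        return "fetch from origin"
    # Origin is failing: fall back to the stale copy while it is allowed.
    if staleness <= stale_if_error_s:
        return "serve stale (origin error)"
    return "return error"


print(cache_decision(30, 60, 300, 86400, origin_ok=True))
print(cache_decision(120, 60, 300, 86400, origin_ok=True))
print(cache_decision(1000, 60, 300, 86400, origin_ok=False))
```

Note that this only helps when the cache itself is still running: it protects against origin failures, not against the failure of the layer doing the caching.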

Progressive Web Apps (PWAs) take offline resilience further by storing application code and data locally on user devices. When backend services fail, PWAs continue functioning using locally cached resources. While this approach cannot enable full functionality for services requiring real-time data processing, it preserves core capabilities during outages.

Separating Critical and Non-Critical Dependencies

Organizations should architect systems to isolate critical workflows from dependencies on external infrastructure. Authentication, payment processing, and core business logic deserve particular attention because failures in these areas completely prevent users from accomplishing their goals.

Running authentication systems through multiple providers or maintaining dedicated authentication infrastructure independent of CDN providers ensures that user login continues functioning even during CDN outages. Payment processing should similarly maintain diverse routing options, as payment failures directly translate to lost revenue.

Non-critical features like analytics, social media integration, or recommendation engines can degrade gracefully during outages without preventing core functionality. Designing systems to continue operating with reduced capability rather than failing completely dramatically improves user experience during infrastructure problems.
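Graceful degradation often comes down to wrapping each non-critical call so that a failure yields a default instead of an exception. A minimal sketch, in which `with_fallback`, `fetch_recommendations`, and `render_product_page` are hypothetical names for an imagined product page:

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")


def with_fallback(call: Callable[[], T], default: T, log: List[str]) -> T:
    """Run a non-critical call; on any failure, record it and use the default."""
    try:
        return call()
    except Exception as exc:  # broad on purpose: the feature is optional
        log.append(f"degraded: {exc}")
        return default


def fetch_recommendations() -> List[str]:
    """Stand-in for a recommendation service that is currently down."""
    raise ConnectionError("recommendation service unreachable")


def render_product_page(product: str) -> dict:
    degraded: List[str] = []
    recommendations = with_fallback(fetch_recommendations, [], degraded)
    # The core content renders whether or not recommendations loaded.
    return {"product": product, "recommendations": recommendations, "degraded": degraded}


page = render_product_page("widget")
print(page)
```

Critical paths such as authentication or payment should not be wrapped this way, since silently substituting a default there would hide a failure the user needs to know about.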

Monitoring, Alerting, and Incident Response

Monitoring that detects problems before they affect users provides a crucial advantage during infrastructure failures. Organizations should monitor service availability from multiple geographic locations, testing complete user workflows rather than just basic connectivity.

When monitoring detects problems, automated incident response playbooks can trigger failover to backup systems, notify relevant teams, and begin documentation. The minutes saved through automation often determine whether an outage becomes a minor hiccup or a major crisis.

Incident response practices should emphasize clear communication with affected users. Transparency about known issues, expected resolution timelines, and alternative options builds trust even during difficult situations. The contrast between organizations that communicate effectively during outages and those that remain silent often shapes long-term customer relationships.

The Future of Internet Infrastructure Resilience

The November 18 Cloudflare outage, combined with recent AWS and Azure incidents, signals that internet infrastructure resilience requires renewed attention from technology leaders, regulators, and the open-source community.

Regulatory Approaches to Infrastructure Concentration

Policymakers in multiple jurisdictions have begun examining whether regulatory intervention could improve internet infrastructure resilience. The European Union’s Digital Operational Resilience Act (DORA), which has applied since January 2025, requires financial services firms to manage third-party technology dependencies and maintain detailed operational resilience plans.

Similar regulatory frameworks may extend to other critical sectors including healthcare, transportation, and government services. Requirements might include maintaining relationships with multiple infrastructure providers, testing failover procedures regularly, and reporting major dependencies to regulators.

However, regulatory approaches face significant challenges. Technology infrastructure evolves rapidly, and prescriptive regulations risk becoming obsolete before they take effect. Overly burdensome requirements might push smaller organizations toward exactly the concentrated providers that regulators aim to diversify away from, as only large providers can afford compliance costs.

Open Source and Decentralized Alternatives

The concentration of internet infrastructure in the hands of a few providers has renewed interest in decentralized alternatives. IPFS (InterPlanetary File System), a peer-to-peer protocol for storing and sharing data, offers one vision for more resilient content distribution that doesn’t depend on centralized CDN providers.

Blockchain-based infrastructure projects aim to create distributed networks of nodes providing CDN-like functionality without single points of failure. However, these approaches face significant technical challenges around performance, cost, and usability that have prevented mainstream adoption.

More pragmatic approaches involve open-source CDN software that organizations can deploy on their own infrastructure or across multiple cloud providers. Tools like Varnish Cache and NGINX provide production-grade caching and content delivery capabilities that organizations control independently.

Edge Computing and Distributed Architectures

The evolution toward edge computing, where processing occurs closer to users rather than in centralized data centers, may inherently improve resilience. When applications run across thousands of edge locations rather than concentrating in a few data centers, localized failures impact fewer users.

Cloudflare’s Workers platform, AWS Lambda@Edge, and similar services enable developers to deploy code that runs at edge locations worldwide. Applications built on these platforms distribute both compute and data across geographic regions, reducing single points of failure.

However, edge computing introduces new complexity around data consistency, debugging, and operational management. Organizations must balance the resilience benefits of distributed architectures against the increased engineering effort required to operate them reliably.

Industry Collaboration and Standards

Technology companies have begun collaborative efforts to improve infrastructure resilience through shared standards and best practices. The Open Web Application Security Project (OWASP) and similar organizations develop guidelines that help organizations build more resilient systems.

Industry consortiums focused on internet infrastructure reliability could accelerate knowledge sharing about failure modes, mitigation strategies, and operational practices. When infrastructure providers share post-incident reports and collaborate on resilience research, the entire ecosystem benefits.

However, competitive dynamics limit collaboration. Infrastructure providers compete intensely for customers, creating incentives to emphasize their own reliability while highlighting competitors’ failures. Overcoming these competitive instincts to enable genuine collaboration requires both industry leadership and regulatory encouragement.

Practical Steps Organizations Can Take Today

While the structural challenges facing internet infrastructure will take years to address, organizations can implement immediate improvements to reduce vulnerability to CDN outages and similar infrastructure failures.

Audit Infrastructure Dependencies

The first step involves mapping all dependencies on external infrastructure providers. Many organizations lack a clear understanding of which critical services route through which providers. Creating dependency maps that trace requests from user browsers through CDN layers, API gateways, authentication services, and backend systems reveals single points of failure.

This audit should identify not just primary dependencies but also hidden dependencies. Authentication might route through Cloudflare even though the main application uses a different CDN. APIs might depend on third-party services that themselves rely on infrastructure providers facing similar reliability challenges.
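A minimal sketch of such an audit, assuming the dependency map has already been collected into a simple dictionary. The service names below are hypothetical and only illustrate how a hidden, transitive dependency on one provider can be surfaced with a breadth-first search.

```python
from collections import deque

# Hypothetical dependency map: each service lists what it calls directly.
# All names are illustrative, not taken from any real system.
DEPENDENCIES = {
    "web-app": ["cdn-b", "auth-service", "payments-api"],
    "auth-service": ["cloudflare"],
    "payments-api": ["fraud-vendor"],
    "fraud-vendor": ["cloudflare"],
    "cdn-b": [],
    "cloudflare": [],
}


def path_to_provider(service, provider, graph):
    """Return the shortest dependency path from service to provider, or None."""
    queue = deque([[service]])
    seen = {service}
    while queue:
        path = queue.popleft()
        for dep in graph.get(path[-1], []):
            if dep == provider:
                return path + [dep]
            if dep not in seen:
                seen.add(dep)
                queue.append(path + [dep])
    return None


def exposed_services(provider, graph):
    """Map every service that reaches the provider to its shortest path."""
    exposed = {}
    for service in graph:
        if service == provider:
            continue
        path = path_to_provider(service, provider, graph)
        if path is not None:
            exposed[service] = path
    return exposed


if __name__ == "__main__":
    for service, path in exposed_services("cloudflare", DEPENDENCIES).items():
        print(f"{service}: {' -> '.join(path)}")
```

In this toy map the main application uses a different CDN, yet it still reaches the provider through its authentication service, which is exactly the kind of hidden dependency the audit is meant to expose.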

Test Failover Procedures Regularly

Organizations maintaining backup infrastructure must verify that failover actually works under realistic conditions. Testing should include both planned exercises and unannounced drills that simulate actual outage scenarios.

Effective testing exercises entire workflows rather than just individual components. Can users authenticate, access core functionality, and complete critical transactions using backup systems? Do monitoring systems detect failures quickly enough to trigger automated failover before users notice problems?
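Whether detection is quick enough can be estimated before a drill. The sketch below assumes DNS-based failover driven by periodic health checks; the function name and parameters are hypothetical, and the formula is a rough upper bound rather than a guarantee, since resolvers and clients do not always honor TTLs.

```python
def worst_case_failover_seconds(check_interval, failures_required,
                                check_timeout, dns_ttl):
    """Rough upper bound on how long users may keep hitting a failed provider.

    Assumes a check starts every `check_interval` seconds, that
    `failures_required` consecutive failed checks trigger failover, that a
    failing check takes up to `check_timeout` seconds to be declared failed,
    and that resolvers may cache the old DNS answer for up to `dns_ttl` seconds.
    """
    detection = check_interval * failures_required + check_timeout
    return detection + dns_ttl


if __name__ == "__main__":
    # Checks every 10 s, 3 failures to trip, 5 s timeout, 300 s TTL.
    print(worst_case_failover_seconds(10, 3, 5, 300))
    # Same checks with a 60 s TTL.
    print(worst_case_failover_seconds(10, 3, 5, 60))
```

Under these assumptions the DNS TTL dominates the budget, which suggests measuring real client behavior during a drill rather than relying on the health-check settings alone.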

Documentation of failover procedures should assume that the people performing recovery actions have never done so before and may be under extreme stress. Step-by-step runbooks with clear ownership and decision-making authority prevent confusion during actual incidents.

Invest in Observability

Comprehensive observability platforms that collect metrics, logs, and traces from across the application stack enable rapid problem diagnosis during outages. When services fail, engineers need to quickly determine whether problems originate from internal systems, infrastructure providers, or other dependencies.
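One simple way to narrow down the source, sketched here under the assumption that the origin can be probed directly as well as through the CDN-fronted hostname. The function name and labels are hypothetical; real diagnosis would combine this with logs and traces.

```python
def classify_failure(via_cdn_ok, direct_origin_ok):
    """Guess where a failure sits from two probe results.

    via_cdn_ok: did a request through the public, CDN-fronted hostname succeed?
    direct_origin_ok: did a request sent straight to the origin succeed?
    """
    if via_cdn_ok and direct_origin_ok:
        return "healthy"
    if direct_origin_ok:
        # Origin answers, but the path through the provider does not.
        return "provider"
    if via_cdn_ok:
        # The CDN path still answers, possibly from cache, while the origin is down.
        return "origin-masked-by-cdn"
    # Both paths fail: the origin itself or a dependency shared by both.
    return "internal-or-shared"


if __name__ == "__main__":
    print(classify_failure(via_cdn_ok=False, direct_origin_ok=True))
```

A result of "provider" matches the pattern described for November 18, where underlying services stayed up but the intermediary layer did not.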

Modern observability platforms like Datadog, New Relic, and Honeycomb provide sophisticated capabilities for correlating issues across distributed systems. However, observability tools themselves often depend on cloud infrastructure that may fail during widespread outages.

Maintaining local copies of critical observability data ensures that engineers can access diagnostic information even when cloud-based platforms become unavailable. Local retention also protects against data loss if observability platforms experience failures during the same incidents affecting other infrastructure.

Develop Incident Communication Plans

Clear communication during outages builds customer trust and reduces support burden. Organizations should prepare status page templates, social media messaging, and customer notification procedures before incidents occur.

Status pages should provide timely, accurate updates without requiring access to infrastructure that may be affected by outages. Using independent status page providers or maintaining static status pages on separate infrastructure ensures customers can access information when primary systems fail.

Internal communication plans should clarify escalation procedures, decision-making authority, and coordination mechanisms. Who decides whether to activate backup systems? Who communicates with customers? Who coordinates with infrastructure providers? Answering these questions during calm periods prevents confusion during crises.

Frequently Asked Questions About the Cloudflare Outage

What caused the Cloudflare outage on November 18, 2025?

Cloudflare reported detecting “a spike in unusual traffic” to one of its core services around 6:20 AM Eastern Time on November 18, 2025. While the company has not released a detailed root cause analysis at the time of this writing, the outage resulted in widespread HTTP 500 Internal Server Errors across its global network. The incident coincided with scheduled maintenance at multiple Cloudflare data centers, leading industry experts to speculate that a configuration change during maintenance may have triggered cascading failures. Cloudflare’s infrastructure handles over 70 million requests per second at peak times, and when core services including the dashboard, the API, and routing systems failed, the impact cascaded to approximately 20% of all websites globally.

How long did the Cloudflare outage last?

Cloudflare first publicly acknowledged the outage at approximately 11:48 UTC (6:48 AM Eastern Time) on November 18, 2025, roughly half an hour after the spike in unusual traffic it detected around 6:20 AM Eastern Time. Partial recovery began around 13:13 UTC, approximately 85 minutes after the initial acknowledgment, when Cloudflare reported that Access and WARP services had returned to normal error rates. Most services were restored by 14:00 UTC, meaning the peak outage lasted approximately 2 hours and 12 minutes measured from the acknowledgment. However, some customers continued experiencing higher-than-normal error rates for several additional hours as systems fully stabilized. The total impact window from initial detection to complete recovery spanned roughly 3-4 hours.
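The intervals quoted above can be checked with a few lines of date arithmetic. The variable names are illustrative, and the Eastern conversion assumes a fixed UTC-5 offset (Eastern Standard Time, which applies in November).

```python
from datetime import datetime, timedelta, timezone

# Timestamps as given in the timeline above (UTC).
acknowledged = datetime(2025, 11, 18, 11, 48, tzinfo=timezone.utc)
partial_recovery = datetime(2025, 11, 18, 13, 13, tzinfo=timezone.utc)
mostly_restored = datetime(2025, 11, 18, 14, 0, tzinfo=timezone.utc)

# Assumption: Eastern Standard Time is a fixed UTC-5 offset on this date.
EST = timezone(timedelta(hours=-5))
acknowledged_eastern = acknowledged.astimezone(EST)

minutes_to_partial = (partial_recovery - acknowledged).total_seconds() / 60
peak_minutes = (mostly_restored - acknowledged).total_seconds() / 60
peak_hours, peak_remainder = divmod(int(peak_minutes), 60)

print(acknowledged_eastern.strftime("%H:%M"))   # 06:48
print(minutes_to_partial)                       # 85.0
print(peak_hours, peak_remainder)               # 2 12
```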

Why did ChatGPT stop working during the Cloudflare outage?

OpenAI’s ChatGPT and other AI services rely on Cloudflare’s infrastructure for critical functions including DDoS protection, bot detection, content delivery, and API gateway services. When users access ChatGPT, their requests first pass through Cloudflare’s edge network, where security systems verify that users are human and not automated bots. During the November 18 outage, these verification systems failed, displaying error messages stating “Please unblock challenges.cloudflare.com to proceed.” Even though OpenAI’s underlying AI models and compute infrastructure remained operational, users could not reach the service because the intermediary Cloudflare layer was down. This dependency highlights how modern AI services depend not just on model quality but on the entire infrastructure stack enabling user access.

Was the Cloudflare outage a cyberattack?

Cloudflare has not indicated that the November 18, 2025 outage resulted from a cyberattack. The company’s statement mentioned detecting “unusual traffic” but did not characterize this as malicious activity. Given that Cloudflare routinely defends against massive DDoS attacks as part of its core business, an attack large enough to overwhelm the entire global network would represent an unprecedented escalation in cyber warfare. More likely scenarios involve internal configuration errors or cascading failures within Cloudflare’s own systems, potentially related to scheduled maintenance activities that were occurring at multiple data centers on the same day. Cloudflare typically publishes detailed post-incident reports within 48-72 hours of major outages, which should provide definitive information about the root cause.

Which websites were affected by the Cloudflare outage?

Major services affected by the November 18, 2025 Cloudflare outage included OpenAI’s ChatGPT, DALL-E, and API services; X (formerly Twitter); Canva design platform serving over 100 million users; Spotify music streaming; Letterboxd film review site; Downdetector outage tracking service; online games including League of Legends and RuneScape; transportation apps like New Jersey Transit mobile ticketing; and thousands of e-commerce websites, news organizations, and business applications. Because Cloudflare provides infrastructure to approximately 20% of all websites globally, the exact list of affected services is extensive and diverse. Any website or application routing traffic through Cloudflare’s CDN, using its security services, or depending on its DNS infrastructure experienced disruptions during the outage.

How much money did companies lose during the Cloudflare outage?

Quantifying the exact economic impact of the November 18, 2025 Cloudflare outage is difficult, but estimates suggest losses in the hundreds of millions of dollars globally. E-commerce sites processing significant daily revenue lost every sale during the outage period. A mid-size e-commerce platform processing $10 million monthly averages roughly $14,000 in sales per hour, or about $330,000 per day, and losses during peak trading hours run well above that average once cart abandonment and reputational damage are factored in. For large platforms like X, which generates advertising revenue based on user engagement, hours of downtime translate to direct revenue losses. Beyond immediate sales, companies faced productivity losses as employees could not access cloud-based tools, IT departments dealt with support requests, and business operations were disrupted. Cloudflare’s stock price dropped more than 5% in pre-market trading, reflecting investor concern about reliability and customer attrition.
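As a baseline for the direct-sales component only, the sketch below spreads the $10 million monthly figure evenly across a 30-day month. Both the even spread and the 30-day month are simplifying assumptions, and the function name is hypothetical. Peak-hour concentration, cart abandonment, and reputational effects would push real losses above this floor.

```python
def baseline_sales_loss(monthly_revenue, outage_minutes, days_in_month=30):
    """Direct sales lost if revenue were spread evenly over the month."""
    hourly_revenue = monthly_revenue / (days_in_month * 24)
    return hourly_revenue * outage_minutes / 60


if __name__ == "__main__":
    per_hour = baseline_sales_loss(10_000_000, 60)
    peak_window = baseline_sales_loss(10_000_000, 132)  # 2 hours 12 minutes
    print(round(per_hour))      # 13889
    print(round(peak_window))   # 30556
```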

Can I prevent my website from going down during CDN outages?

Organizations can implement multi-CDN strategies to reduce vulnerability to single-provider failures. This approach involves routing traffic through multiple CDN providers simultaneously or maintaining hot standby arrangements that automatically fail over when the primary provider experiences issues. Implementing multi-CDN architecture requires configuring DNS-based failover with health checks that detect outages within seconds and redirect traffic to backup providers like Akamai, Fastly, or Amazon CloudFront. Setting DNS Time-to-Live (TTL) values under 5 minutes enables faster failover, because resolvers stop handing out the failed provider’s records sooner. Extended cache lifetimes also help, but only for content cached somewhere that remains reachable, such as the backup CDN or the user’s browser; content cached only at the failed provider’s edge goes down with it. However, these strategies require significant engineering investment and ongoing operational costs that smaller organizations often cannot afford, leaving them vulnerable to single-provider dependencies.
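The health-check side of this can be sketched as a small selector that prefers the primary provider and switches only after several consecutive failed checks. This is an illustrative model, not any DNS vendor's API; the class names, provider names, and threshold are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str
    priority: int                  # lower number = preferred
    consecutive_failures: int = 0


class FailoverSelector:
    """Pick the highest-priority provider that has not tripped the threshold."""

    def __init__(self, providers, failure_threshold=3):
        self.providers = sorted(providers, key=lambda p: p.priority)
        self.failure_threshold = failure_threshold

    def report(self, name, healthy):
        """Record one health-check result for the named provider."""
        for provider in self.providers:
            if provider.name == name:
                if healthy:
                    provider.consecutive_failures = 0
                else:
                    provider.consecutive_failures += 1

    def active(self):
        """Return the provider that traffic should currently be sent to."""
        for provider in self.providers:
            if provider.consecutive_failures < self.failure_threshold:
                return provider.name
        # Every provider looks unhealthy: fall back to the preferred one.
        return self.providers[0].name


if __name__ == "__main__":
    selector = FailoverSelector([Provider("primary-cdn", 1), Provider("backup-cdn", 2)])
    for _ in range(3):
        selector.report("primary-cdn", healthy=False)
    print(selector.active())
```

Requiring consecutive failures trades a little detection speed for fewer false failovers; a single healthy check resets the count and returns traffic to the primary in this sketch, whereas a production setup might add a recovery delay.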

Is Cloudflare reliable for business-critical applications?

Cloudflare operates one of the world’s most reliable content delivery networks, handling peaks above 70 million HTTP requests per second and blocking over 33 trillion threats monthly. However, the November 18, 2025 outage demonstrates that no infrastructure provider achieves perfect reliability. This incident marked the third major infrastructure failure in less than a month, following significant outages at Amazon Web Services and Microsoft Azure. Organizations building business-critical applications should evaluate their risk tolerance and implement appropriate redundancy strategies. For applications where downtime directly translates to significant revenue losses or safety risks, multi-CDN architectures provide essential insurance. For less critical applications, accepting occasional outages in exchange for Cloudflare’s security, performance, and cost benefits may represent an acceptable tradeoff. The key is making informed decisions about infrastructure dependencies rather than assuming any single provider offers perfect reliability.

What is a CDN and why do so many websites use Cloudflare?

A Content Delivery Network (CDN) is a geographically distributed network of servers that caches website content close to end users, dramatically improving performance and reducing load on origin servers. Cloudflare has become one of the most popular CDN providers because it offers a generous free tier, provides integrated DDoS protection and web application firewall services, operates over 330 data centers in 120 countries, and simplifies implementation through DNS-based configuration. When websites use Cloudflare, the CDN sits between users and origin servers as a reverse proxy. User requests hit nearby Cloudflare edge servers first, which either serve cached content immediately or fetch fresh content from origin servers. This architecture reduces latency, absorbs traffic spikes, filters malicious requests, and protects origin servers from attacks. The combination of performance, security, and affordability has made Cloudflare infrastructure essential to approximately 20% of all websites globally.
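The serve-from-cache-or-fetch behavior described above can be illustrated with a toy model. This is a deliberately simplified sketch and not how Cloudflare implements caching; the class name, the fixed TTL, and the injected clock are assumptions made so the example stays self-contained.

```python
import time


class EdgeCache:
    """Toy reverse-proxy cache: serve a fresh cached copy, else fetch from origin."""

    def __init__(self, fetch_from_origin, ttl_seconds=60, clock=time.monotonic):
        self.fetch_from_origin = fetch_from_origin  # callable: path -> body
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self.store = {}  # path -> (expires_at, body)

    def get(self, path):
        now = self.clock()
        entry = self.store.get(path)
        if entry is not None and entry[0] > now:
            return entry[1], "HIT"
        # Missing or expired: go back to the origin and cache the result.
        body = self.fetch_from_origin(path)
        self.store[path] = (now + self.ttl_seconds, body)
        return body, "MISS"


if __name__ == "__main__":
    cache = EdgeCache(lambda path: f"body of {path}", ttl_seconds=60)
    print(cache.get("/index.html"))  # first request reaches the origin
    print(cache.get("/index.html"))  # second request is served from cache
```

Every "HIT" is a request the origin never sees, which is how this layer reduces latency and absorbs traffic spikes; it is also why requests cannot reach the origin at all when the layer itself fails.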

How did X (Twitter) respond to the Cloudflare outage?

X experienced severe disruptions during the November 18, 2025 Cloudflare outage, with Downdetector recording over 12,000 simultaneous outage reports at peak impact. Users worldwide could not load timelines, post content, or access direct messages. The platform did not issue official statements during the outage, likely because X’s own infrastructure for posting status updates depends on the same Cloudflare services that were down. Elon Musk, who owns X and co-founded OpenAI (he has since parted ways with the company acrimoniously), did not publicly comment on the incident during the outage. The timing proved particularly unfortunate as major news events unfolded simultaneously, including market volatility and breaking political developments. Alternative platforms like Bluesky and Mastodon reported unprecedented traffic spikes as users sought functioning social media alternatives during X’s downtime.

Will there be another Cloudflare outage?

While Cloudflare and other infrastructure providers invest heavily in reliability, complex distributed systems inevitably experience failures. The clustering of major outages at AWS, Azure, and Cloudflare within a single month suggests that internet infrastructure faces increasing strain as traffic volumes grow and systems become more complex. Cloudflare will likely publish a detailed post-incident report explaining the root cause of the November 18, 2025 outage and describing changes implemented to prevent recurrence of the specific failure mode. However, different failure modes will emerge as systems evolve. Organizations concerned about infrastructure reliability should implement monitoring, maintain relationships with multiple providers, test failover procedures regularly, and develop incident response plans that assume outages will eventually occur. The question is not whether infrastructure failures will happen but how well organizations prepare for and respond to inevitable disruptions.

How can I check if Cloudflare is down right now?

To check Cloudflare’s current status, visit the official Cloudflare Status Page, which provides real-time information about ongoing incidents and scheduled maintenance across all services and regions. During major outages, this page itself may become inaccessible, as occurred briefly on November 18, 2025. Alternative monitoring options include Downdetector, which aggregates user reports of outages; social media platforms, where users quickly report problems using hashtags like #CloudflareDown; and third-party monitoring services that test connectivity from multiple global locations. Keep in mind that some of these alternatives may themselves be affected, as both X and Downdetector were on November 18. Organizations running business-critical applications should implement their own monitoring using tools like Pingdom, UptimeRobot, or Datadog that test complete user workflows from multiple geographic locations and alert teams immediately when issues are detected, rather than relying solely on provider status pages.
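For teams building their own checks, one common pattern is to alert only when a large enough share of probe locations fail, so that a single unreachable vantage point does not page anyone. The sketch below is an assumption about how such a rule might look; the function name, location labels, and threshold are hypothetical and not tied to any of the tools named above.

```python
def should_alert(results_by_location, failure_share=0.5):
    """Alert when more than `failure_share` of probe locations report failure.

    results_by_location maps a location label to True (check passed)
    or False (check failed).
    """
    if not results_by_location:
        return False
    failed = sum(1 for ok in results_by_location.values() if not ok)
    return failed / len(results_by_location) > failure_share


if __name__ == "__main__":
    # Hypothetical probe results from three regions.
    print(should_alert({"frankfurt": False, "virginia": False, "singapore": True}))
    print(should_alert({"frankfurt": False, "virginia": True, "singapore": True}))
```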

Conclusion: Building a More Resilient Internet

The November 18, 2025 Cloudflare outage serves as a sobering reminder that the internet’s apparent reliability rests on surprisingly fragile foundations. As digital services become increasingly essential to every aspect of modern life, the consequences of infrastructure failures extend far beyond mere inconvenience.

The concentration of critical internet infrastructure in the hands of a few providers creates systemic risks that no individual organization can fully mitigate through its own actions. While multi-CDN strategies, sophisticated failover systems, and careful architecture can reduce vulnerability, they cannot eliminate dependence on shared infrastructure.

Addressing these structural challenges requires cooperation among technology companies, regulators, and the open-source community. Infrastructure providers must invest in resilience even when such investments don’t generate immediate revenue. Regulators must develop frameworks that encourage diversification without stifling innovation. Open-source developers must continue creating alternatives that reduce dependency on proprietary platforms.

For individual organizations, the path forward combines practical resilience engineering with realistic acceptance of remaining risks. No architecture eliminates all possibility of service disruption. The goal should be reducing both the frequency and impact of outages to acceptable levels while maintaining transparent communication with users when problems inevitably occur.

The clustering of major infrastructure outages in recent months suggests that the internet has entered a period of reckoning. The architectures that enabled unprecedented growth over the past two decades now strain under the weight of their own success. The next evolution of internet infrastructure must prioritize resilience alongside the performance and cost metrics that have traditionally driven technical decisions.

Whether the industry can accomplish this evolution voluntarily or whether regulatory intervention will prove necessary remains an open question. What seems certain is that the current state of affairs, where single provider failures can disable vast swaths of the internet within minutes, is not sustainable as digital infrastructure becomes ever more critical to economic activity and social functioning.

Organizations that treat the November 18 outage as a learning opportunity rather than an isolated incident will find themselves better prepared for the inevitable future failures that complex systems eventually produce. Those that maintain a posture of complacency, assuming that infrastructure providers will solve these problems without customer pressure, risk facing far more severe consequences when the next major outage occurs.

The internet’s original designers built a network architecture capable of routing around damage, with resilience and redundancy as core principles. As the internet has commercialized and consolidated, those principles have sometimes taken a backseat to efficiency and cost optimization. Perhaps the series of major outages serves a valuable purpose, reminding the technology industry that resilience matters and deserves sustained investment even when immediate financial returns remain unclear.

The path to a more resilient internet begins with acknowledging current vulnerabilities, learning from each failure, and systematically addressing single points of failure wherever they exist. The November 18, 2025 Cloudflare outage provided another data point in this ongoing process. The question is whether the industry will treat it as a wake-up call or simply another incident to move past once services return to normal.